Tuesday, November 25, 2008

Thankful for Family, NI, and Sarcasm

First, of course, I want to thank God for all my blessings. Next, I want to thank my family: my Mom and Dad, for giving me a good foundation and work ethic and for encouraging me to go to college in spite of their very limited education. I'm thankful for my kids for keeping me grounded through a bunch of tough years and for putting up with me and my warped sense of humor the rest of the time. And I'm even thankful for my hard-driving, have-to-be-right brother and my sweet sister.

I'm thankful for my really good friends, who helped me get past my shyness, helped with my introversion, and helped me get through my tough years. They are fun to hang out with when I don't have a date. In other words, we always hang out.

I'm also thankful to National Instruments for making it easy to do my job. LabWindows CVI is a great tool for Test Engineers and makes it easy to develop code for test sets and for easy-to-use hardware. I'm also thankful for all the helpful people I've met and worked with there at NI, like Joel, Wendy, Santiago, and Conan. I'm especially thankful for NI Week and the Wednesday night party they throw, though maybe I'm more happy they do it than actually "thankful". And, yes, I'm even thankful for LabVIEW; it's a good language and most likely a piece of the future of software.

I'm also thankful to both of the Directors I work for, especially JT. They believe in me and know that I'll work hard to do a good job for both of them.

[Start Sarcastic Voice]

I'm also thankful for the boss who said I couldn't follow a process. Now that I've helped develop our company's processes and reach CMMI level 5, you've proved how well you know people. I'm thankful to the same boss, who said I would never work in software at our company again. She was right: I'm not in the software group, I'm in Test Engineering, doing much more software, and more fun software.

I'm thankful to Electrical Engineers. Since most of you guys don't think I can tell the difference between a resistor and an FPGA, you make it very easy to impress you. Thanks especially to the lead EE guys who couldn't find the problem between the IMU and GPU on LOSAT. You made it easy for "just a software guy" to find the 25ns glitch that was resetting the IMU with just a schematic and an O-scope.

I'm also thankful to the boss who believes that software is just a passing fad. You make everyone else seem so much smarter.

I'm also thankful to the place I work. [insert almost any sarcastic comment from Dilbert and it applies. I'm pretty sure he works where I work]

[End Sarcastic Voice]

The reality of it is I have a lot to be thankful for and I know that I am truly blessed.

Thank you

Sunday, November 23, 2008

NI Help

Lately I've been integrating some Agilent RF equipment into a test set. I now have a much better appreciation of NI help. I've always felt like NI has some of the best technical help, examples, and documentation around, but I've come to appreciate it even more.

I'm trying to use TestStand to control an automated test on an RF Signal Generator, RF Power Meter, and an RF Switch, all from Agilent, but I'm having trouble. Agilent really is trying to help, but they still have a really long way to go to get close to NI help. When I had problems I entered a couple of on-line help requests but received no responses. I had to call the local sales rep to get the e-mail of a tech rep. I have been e-mailing him and he has been helpful in a limited way. I did get the manual web sequence to control the RF Switch, but for automated tests, that doesn't matter.

The RF signal generator was easy because I've used it before and figured out its quirks. The examples for the RF Power meter had nothing to do with actually reading power, hmmm. When I made calls to the driver functions, they came back with error messages that gave no hint at what was needed. The function panel help gave no hint at what the parameters were looking for. I wound up using some low level SCPI commands in combination with the driver functions. I couldn't get it to work with all SCPI or all driver functions.
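When driver error messages give no hint, one low level SCPI trick that sometimes helps is reading the instrument's own error queue. The sketch below is only an illustration, not what was actually run on this test set: it assumes the instrument answers the standard SCPI `SYST:ERR?` query with replies of the form `-113,"Undefined header"`. The names `parse_scpi_error`, `drain_error_queue`, and `FakeInstrument` are hypothetical, and the fake stands in for whatever real session (VISA or a vendor driver) would send the query.

```python
import re


def parse_scpi_error(reply):
    """Split a SYST:ERR? reply such as '-113,"Undefined header"' into (code, message)."""
    match = re.match(r'\s*([+-]?\d+)\s*,\s*"(.*)"\s*$', reply)
    if match is None:
        raise ValueError(f"unexpected SYST:ERR? reply: {reply!r}")
    return int(match.group(1)), match.group(2)


def drain_error_queue(query, max_reads=20):
    """Call query("SYST:ERR?") until the instrument reports code 0 (no error).

    `query` is any callable that sends a command string and returns the reply.
    max_reads guards against an instrument that never reports an empty queue.
    """
    errors = []
    for _ in range(max_reads):
        code, message = parse_scpi_error(query("SYST:ERR?"))
        if code == 0:
            break
        errors.append((code, message))
    return errors


class FakeInstrument:
    """Hypothetical stand-in for a real instrument session (assumption, for illustration)."""

    def __init__(self, queued_errors):
        self._queued = list(queued_errors)

    def query(self, command):
        if command != "SYST:ERR?":
            raise ValueError("this fake only answers SYST:ERR?")
        if self._queued:
            return self._queued.pop(0)
        return '+0,"No error"'
```

Calling `drain_error_queue(session.query)` right after a failing driver call would list whatever the instrument itself complained about, which may say more than the driver's return code does.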

As for the RF Switch, the examples seem to include calls to driver functions for many different switch types. For my RF switch, the driver functions generate a lot of "Not supported" error messages. But it was very hard to figure out what is supported or what calls I could make. Also, once I loaded the Agilent IO Drivers (which are required for the system to even see their switch) the Pickering switches disappeared from the PXI chassis, at least from a logical standpoint. I still haven't figured that one out.

It's been a week and a half trying to get the RF interconnections to work. I think I'm close, but compared to the NI instruments, it's taken way too long.

Using Agilent instruments has given me more of an appreciation for how good NI help, discussion boards, phone help, and e-mail help really are. I especially want to thank Joel Garner, NI Sales Engineer extraordinaire, for his help.

Sunday, November 9, 2008

Misunderstood

It seems what Test Engineering does is a mystery to the other groups. We are called in late in projects because Project Managers don't know why they need us until they realize they need to make more than one widget (or whatever it is they're making). Then they realize their widget (or whatever it is they're building) was designed in a way that makes it incredibly hard to test.

It's too late but that's when they figure out they needed us to begin with.

We're trying to educate the programs on what we can do for them. What we can do is:

- We do hardware and we do software.

- We develop systems (Test Systems)

- We can help them design their widget so it can be tested.

- We can make their tests automatic and repeatable.

- We can help with developmental testing

- We can make sure the widget is built correctly

- We can test your system in the field or on the production line

...Bottom line is we can help.

Friday, October 10, 2008

CVI Rules!

I finally got away from doing documentation and processes for a day, so I was able to do some fun stuff...Technical work. I'm currently working on a Test Station Self Test. Some parts of the Self Test are done in LabVIEW, partially because others did those parts with LabVIEW, partially because I broke down and wrote some LabVIEW code.

Well, I'm updating all the LabVIEW code to fit it into TestStand for the Self Test. It's frustrating: it's hard to find the vi's I need, and it does things for me...I'm not sure what...that I don't think I want it to. For example, I opened one of the vi's to see the picture on one of the DAQmx self test vi's it contained (in order to find its equivalent for NI Sync), without changing a thing. When I closed the vi, with no changes, it asked me if I wanted to save. I didn't change anything! What was different that it needed to save?

As I was working in LabVIEW, it took quite a while to update the code, but I was learning. I was figuring out what the microscopic pictures were on the vi's, although I still have no idea why they chose the pictures they did for some of them. Things were moving along smoothly with only a low constant pain in my wrist. I was finally done with the LabVIEW part.

Then I needed to develop some CVI instrument wrappers. And BAM! They were done! The text based code just flowed from my fingertips. I plugged it into TestStand and BAM BAM! It worked...first try! And I wasn't even entirely sure how the RF Signal Generator worked. Compared to the struggle I had getting the LabVIEW stuff out, it was a breeze. It was like night and day! It was slicker than deer guts on a door knob! (A good old southern saying)

All I could say was CVI Rules! and LabVIEW drools!

Tuesday, September 23, 2008

Music and Teams

There were several points that seemed counterintuitive about teams until you realize that Pink Floyd produced many great albums (for the young people, an album is a vinyl disc that music was recorded on and played on a record player...with a needle) including The Dark Side of the Moon, one of the best-selling albums of all time.

First point, hiring people based on best team fit is overrated. Sometimes the band members were at each other's throats. The point was they were all smart, all creative, and they had the drive to get the job done. They didn't let creative differences or personal differences get in the way of their goal.

Second point, some teams fall apart with dissension between team members, some teams refocus their experiences into creativity. Pink Floyd re-focused into writing great music while software teams can refocus into great software.

Third point, creative people push themselves, and others, hard. Creative people will push themselves to bring their ideas to the forefront and sometimes will push others to get their ideas out there. They seem to push each other, sometimes in not so nice ways, to get people to complete their own ideas.

Fourth point, don't let technology overwhelm the ideas. This one may be a stretch but it has a good point. With Pink Floyd, all the technology behind the shows sometimes got in the way of the music. Today, all the e-mail, IM, and blogs distract us from reaching the goal of developing our ideas.

Overall, the parts that I read were very enjoyable. I'm going to have to go back to the bookstore, hang out some more, and read the book. Or, hey, I could actually buy the book! We'll have to see.

Sunday, September 14, 2008

LabVIEWism

I've noticed some traits of LabVIEW programmers and the word "LabVIEWism" came to mind. Sort of like Hinduism or materialism. It seems to be more than just a programming language, it seems to be a way of life...programming life that is. Similar to what Protestantism is to Protestants or Communism is to Communists. (No association with LabVIEW implied)

To me, it seems that LabVIEW is ingrained in the LabVIEWist being, it flows in their blood. You cut them and they bleed hemoglobin vi's. (I'm sure the icons are red)

LabVIEWism has a lot of followers that are dedicated to the LabVIEW way of programming, focused on converting us lowly CVI programmers. NI Week is a tribute to LabVIEWism, they inundate everyone with LabVIEW with only a token acknowledgment to CVI.

I don't bleed vi's, or dream graphical code. I still dream in C and preach the virtues of the code editor and the power of the command line. LabVIEWism may take over our company's test group, but there will always be holdouts.

Sunday, September 7, 2008

LabVIEW...

But continuing to work with the pain, my left hand is just not as dexterous as my right. It's hard to hit the small wire connections. It's annoying trying to figure out which vi to use for what functions.

Some of the features that would be VERY helpful to get us LabVIEW handicapped people working in LabVIEW are:

- a zoom feature. I could make a vi bigger and actually hit the terminal.

- Or have it where, when I miss hitting a terminal, I can use tab or shift tab to move the connection from the current terminal to the next or previous terminal.

- Have LabVIEW be able to select the end of a wire and then drag it around using the arrow keys instead of the mouse.

- Have the palette navigable with the arrow and tab keys. Make it where you can select the palette, then with the arrow and tab keys move about in the palettes to the vi you want. From there, be able to select it and drag and drop with the arrow keys.

Have the LabVIEW developers ever heard about Test Driven Development (TDD) or automated builds? Here are some more features.

- For automatic builds, have VI's able to be Compiled (or whatever happens to them) from the command line and verified none of them are broken (with the broken arrow for the run button)

- For Test Driven Development, have an automated test framework, something similar to the NUnit test development suite.
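For readers who have not used an NUnit-style framework, here is a minimal sketch of what that kind of automated test looks like in a text language. It uses Python's built-in `unittest` module rather than NUnit or anything from LabVIEW, and the `within_limits` function and its tolerance band are made-up examples, not code from a real test set.

```python
import unittest


def within_limits(measured, nominal, tolerance):
    """Return True when measured is within +/- tolerance of nominal."""
    return abs(measured - nominal) <= tolerance


class WithinLimitsTest(unittest.TestCase):
    """Each test method is one small, repeatable check of the function above."""

    def test_value_inside_band_passes(self):
        self.assertTrue(within_limits(5.02, nominal=5.0, tolerance=0.05))

    def test_value_outside_band_fails(self):
        self.assertFalse(within_limits(5.2, nominal=5.0, tolerance=0.05))

    def test_edge_of_band_passes(self):
        self.assertTrue(within_limits(4.5, nominal=5.0, tolerance=0.5))


def run_suite():
    """Load and run the tests programmatically, as an automated build would."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(WithinLimitsTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Because `run_suite()` returns a result object, a build script can call it from the command line and fail the build when `wasSuccessful()` is false, which is the combination of automated builds and automated tests the wish list above is asking for.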

One more suggestion...have a cheaper copy for use by anyone. MS has copies of things like Visual Studio C++ for $100 or so at computer stores.

It's still easier to think in C (LabWindows CVI) than it is in LabVIEW, and until I can develop LabVIEW as well as I can develop C code, I'm sure I'll still be using CVI. But I will always keep trying to learn new stuff.

Now as soon as NI comes up with a telepathic programming interface, I'll be there!

Sunday, August 24, 2008

I've been working on processes and metrics for developing test sets for quite a while now (a couple of years). I love doing technical work so it has been tough doing mainly documentation. I have worked on some test programs part time while working on the processes, so it hasn't been totally torturous.

I believe there is always a process of one type or another in developing test stations. The problem is sometimes the process is the POOMA (Pull Out Of My A**) process or maybe the "wing it" process. But then there's the other end of the process universe. This is where the process makes it nearly impossible to do a small project. Then, in the middle, there's the agile process, which is supposed to be the process that allows tasks to be done quickly and with the ability to easily adapt to changes. It's still not a cure-all.

Even people who say they have no process have a process; it's just not as straightforward as most, and it may not even seem coherent.

After working on the processes for a while I know that one process does not fit all projects, even though that's what we try to do. I was supposed to document the process we go through to build test stations. Each project has been unique in its own way, adding some steps that aren't in the process and skipping other steps that are in the process. Some projects that try to follow the process find that outside groups don't follow the process, which makes our process fail. There are many reasons processes fail.

If the processes aren't working (not that people just don't want to do it), they need to be adjusted in real time rather than going through the year-long process change process.

The project leads, the ones who actually have to make the process happen, have to be the ones to adjust the process as needed. They need the leeway to adjust it as needed. The Process Police also need to understand that a single written process is not a panacea; it won't cure all. The processes need to be adjusted.

While I've been working on the processes, this is the dilemma I've been dealing with: having processes that don't impede getting things done but satisfy the Process Police.

Saturday, August 23, 2008

Green gone wrong

As we move into the future, there will be more and more Green Engineers. Scientists will go Green to give the Green Engineers more work to do. Of course, the Green Scientists will become smarter and more powerful. Until one day, the inevitable will happen: there will be a Green Mad Scientist. The Green Mad Scientist will then try to take over the world. The Green Mad Scientists will make Green Monsters that will terrorize humanity, but be good for the environment.

These Green Mad Scientists will start working with Green Artificial Intelligence. The Green Artificial Intelligence will develop more adaptable, more specific, and smarter shades of color Green.

These smart shades of the color Green will become smarter and smarter until, one day...there will be super intelligent shades of the color Green wanting to take over the world.

So beware of Green Engineering and the inevitable super intelligent shades of the color Green.

(With apologies to Douglas Adams and "The Hitchhiker's Guide to the Galaxy" and to the Super Intelligent shades of the color Blue. You're super intelligent, but not as super intelligent as the super intelligent shades of the color Green.)

Obligatory mention of NI, LabWindows CVI, Test Engineering, and even LabVIEW. I'll write about you all next week.

Friday, August 8, 2008

What I learned at NIWeek

His talk made me think. It made me think about thinking, about using my brain, about getting things moving in an innovative way, not a new way, but a different, better way.

And that's what I like about National Instruments and NIWeek. Not that there are new products that are Smaller, Better, Faster than the older products. It's about innovation, taking things known, re-combining them, and making something better and getting it out there.

I'm not a LabVIEW fan, I may have said that before, but I am a fan of the innovation of LabVIEW. Dr. James Truchard, Jeff Kodosky, and Bill Nowlin combined the computer, programming, and graphics into LabVIEW, an innovative graphical programming product. It's more than just the Test, the Software, or the Hardware aspects of NI, it's the innovation.

To me, innovation is the whole point of NIWeek, the innovative thoughts behind the new products. NIWeek inspires me to be innovative, to combine old things into new, better things. It gets me thinking along different lines, about more than Test, Software, or Hardware; it's about what can be, about more.

The experience of NIWeek and the products showcased are great. And it's a good way to get everyone together, to network, and focus on test. But, at least to me, it's much bigger than that. NIWeek is to me what Disney World is to a child. Disney World makes kids imagine what could be. And that's me with NIWeek, I'm imagining what can be.

Now I need to move past the kid in me, and move the imagination to innovation. The next step.

Thursday, August 7, 2008

NI Week - Day 3

First, the NI Week block party was great fun last night. The band was great, the food was great, and everyone there had fun. Whoever didn't go missed out! A lot of the people wound up on 6th street after that. It was a great night!

It's the last day of NIWeek and I'm sad that it's over. I went to the last of the seminars, I always enjoy learning more. I will admit that my mind is getting tired. Or maybe it's from only getting about 5 hours sleep.

Today, I learned some about TestStand, more about FPGAs, and about DAQmx synchronization. I feel good about everything I learned, especially the FPGAs and DAQ information.

The new NI PXIe-5673 is a very cool device since you can use it to generate a test GPS signal. We won't need the Spirent thing to generate GPS test signals anymore.

All in all, it was a great week. It was good to see the NI people that I know, especially Wendy and Santiago. It was good to see Luis was still working hard on CVI, keeping it better than LabVIEW.

But now it's good to be home, see the kids, and I look forward to sleeping in my own bed.

I can't wait until NI Week 2009. I'm planning on going even if it's just on an Expo Pass for a day. Remember, it's only 362 days away!

Wednesday, August 6, 2008

NIWeek - Day 2

Another day of Fun and Frolic at NIWeek. I'm getting a lot of good information again today, but it's not as good as yesterday because there are no CVI sessions. Somehow I'll survive no CVI today...I think.

The keynote started the morning off really well. My favorite news was that NI allows different OSs to run on separate cores. That means you can have RT running on one core and Windows running a User Interface on the other core.

After that it's all a blur of LabVIEW, FPGA, DAQmx, and some TestStand thrown in. There are a lot of really nice features in LabVIEW FPGA that make it really flexible and usable. I learned some about DAQmx data streaming and I got to hear the TestStand roadmap for the next couple of years.

I really believe NI is doing a really good job of developing new and innovative products. But I think what NI does best is listening to their customers. They do VERY well at asking what the customer wants and showing the customer what is coming up and asking "Is this something you would use?" Of course, half the time, we customers don't know what we would use until we actually get to use it.

"The futures so bright I've got to wear shades" to re-use lyrics from a song. NI should be wearing shades!

Tuesday, August 5, 2008

NIWeek - Day 1

What a great day! Keynote this morning was very good. CVI information was great! (see end of the blog) NI rolled out LabVIEW 8.6 with cool new features that even I may use! The auto complete for LabVIEW VI's is nice and keeps you from mousing through the palettes. Of course you have to know the VI's name, so those of us who know "Modulo" rather than "Quotient & Remainder", or who work with DAQmx, where some name differences start around the 30th character, may still have some trouble.

I went to a LabVIEW FPGA seminar and it's continuing to evolve and getting more and more powerful.

I went to a seminar on Intelligent control because AI is my hobby. It sounds as if LabVIEW may have some Intelligent control vi's coming out. More details to come.

Joel [Garner] and Travis [I-dont-know-his-last-name] put together a get together talking about new FPGA stuff. It's some good information.

But the highlight of my day was CVI. There was a lot of good CVI information between the CVI round table discussions at lunch, the using multi-core seminar, and the CVI Users group. Wendy [Logan] and Luis [Gomes] did a good job of talking about the future of CVI. They emphasized CVI will be around for a long time to come. But then again, most of us have heard that over and over. Plus they have some good, forward thinking plans for CVI. The only downside was that the CVI demo, where they were destroying a CD, was flinging shrapnel, so they wouldn't run it live.

Also, you (yes you!) can sign up to get a beta copy of CVI 9.0 by going to ni.com/beta. Wendy only mentioned it at every slide and two or three times on some slides.

CVI...Learn it...Love it...Live it!

OK, I'm tired and getting a little bit loopy.

Monday, August 4, 2008

NI Week - Day 0

Tonight was the first night of NI Week. Just the meet and greet and get to know the Expo floor. There was only one CVI display, but of course it was great. They were stress testing CDs by spinning them up until they disintegrate which, of course, is cool! It was good to see Wendy (Logan) again, Queen of LabWindows CVI. She is energetic as always. And CVI is exciting as always.

It was also good to see Santiago Delgado, king of TestStand. He always amazes me. Tonight he was playing the guitar at a LabVIEW sound demo...and he was VERY good.

I did get to go to 6th Street, heard some good music and drank some beer. There were a lot of obvious NIWeek participants out tonight (Nerds). I'm excited about Tuesday, the first full day of NI Week...at least for me.

To everyone not here, you're missing some good stuff!

Sunday, August 3, 2008

NI Week! Nerdvana

Nerdvana - State of total geekdom.

It's NI Week, I'm in Bastrop with a friend and I'll move over to Austin tomorrow. I'm very excited about the start of NIWeek, I will be in Nerdvana!

Most of my friends think this is nerdy, that I'm excited about going, and it may be. However, it's a great networking and learning opportunity. They go to their own industry conferences and networking opportunities, but "Market-vana" doesn't sound that good. Most of them are in sales and marketing and, to them, everything is a networking opportunity.

NIWeek is a time to immerse myself in Test, Software, Hardware, and like-minded people. A time to become one with Test, a time to reach...

Nerdvana!

Sunday, July 27, 2008

NI Week - LabVIEW-fest

NI Week is getting close and I'm excited. NI Week is a huge LabVIEW-fest, it's almost all about LabVIEW...LabVIEW presentations, demonstrations, and keynotes. I'm still excited about being at NI Week in spite of that. Luckily there are some LabWindows/CVI presentations and, at least last year, there was a CVI section in the NI display. I want to thank Wendy Logan for her work on the CVI stuff. Here's a link to some of the CVI events.

I'm not a huge LabVIEW fan, mainly because of the mouse use and Carpal Tunnel Syndrome. Recently I realized there were other reasons too. I read a book called "In the Beginning...Was the Command Line" by Neal Stephenson. It talks some about the history of OSs and about controlling the computers. It made me start thinking about how I use computers...not at work but at home. At work, everything is set up for you; you use the OS, tools, etc. that work wants you to use.

At home I use Linux (Ubuntu). I like it because if there is a problem, I can look into it, I can figure it out. I can look under the hood...so-to-speak. With LabVIEW, when one of the Vi's doesn't work, or seems to not work, you have to call NI and let them know and they fix it. It's out of my hands. Whatever is under the hood is proprietary, I'm told. You can't just open a Vi with a text editor or compile it from a command line. I can open a file for a C/C++ or Java program, enter it, compile it and run it. Very simple. LabVIEW introduces another layer of abstraction and I just can't see what's below the layer. I've written (in college) a simple C compiler so I understand it, I'm comfortable with it.

LabVIEW is the language of test, the language of the Future, and will be around a long time. You just can't see what's under the hood.

Saturday, July 19, 2008

NI Week - Boondoggle?

Most of them had a chance to go themselves. However, since we're supposed to report on what we learn from NI-Week, most people chose not to ask to go.

Last year I went and I learned a lot, not just about NI products, but about how other companies do things, what equipment is out there, and what services are available. There were a lot of helpful seminars on many different subjects.

One problem I had last year was I brought back a lot of good information, but then I was indirectly told to do my job like we've always done it. So is it really a boondoggle if you learn and are not allowed to use what you learn? I say no.

At least this year I'll get to report on what I learn whether I get to use it or not.

I'm planning on learning:

- What are the future trends in Test Software Development?

- Trends in shrinking Test Set design.

This is where my learning experience starts, but once I get there my focus may change. I also want to use the digital video camera to come up with some good man on the street views on these things.

My goal for this year is to go, learn, come back and teach anyone who will listen. Of course I want to network and have fun, too.

Everyone should go, learn, and have fun.

Tuesday, July 15, 2008

NI-Week Seminars

Now you can start planning your learning experience at NI-Week. I hope to see everyone there!!

------------------------------------------------------------------------------

We are working hard to get the NIWeek Final Program onto ni.com/niweek so you can see all the great activity that will be happening at this year's event. It will be the largest NIWeek ever from a technical content perspective with over 240 sessions from which to select.

Until that PDF is available, here are the links that will allow you to see the content online, register if needed and start to build your personal NIWeek schedule.

If you are not registered for NIWeek and want to see the content that will be presented, go here to View Sessions. This shows you the content but not the dates, times and locations of each session. Only registrants are able to see that information at the link below.

For registrants, you can now go here to Build a Schedule.

See you in 21 days!

Rod

Sunday, July 6, 2008

Great Engineers

Great Engineers...

...know what they are doing, they have a reason for doing what they do and they know why things are happening like they are.

...don't believe things "Just Happen" (corollary to the above). They don't feel comfortable with just using instruments, specific electronics, API's or algorithms. They need to know how they really work.

...understand their business and their customers. Great Engineers know what matters for their customers and their business. They can make the trade-offs that make the most sense for their business and for their customer.

...put customers and their team first. No task is below a great engineer and no customer is unimportant.

...have the highest integrity and ethics. They care about how they accomplish their tasks. Great engineers care about their team and their customers and keep integrity and ethics in all of their dealings.

...have excellent people skills and communications skills. Great Engineers work well with others, respect others, and communicate clearly and effectively.

...have a wide support network. Great Engineers have contacts and a network to support them and to allow the engineer to be far more effective and become a great engineer.

There are many other aspects like quality, focus, and design skills but I have to stop typing somewhere.

These soft skills differentiate the great engineers from good engineers. I know I have shortcomings in some of these areas but I also know I am always trying to improve and hopefully become a Great Engineer.

"Know me for who I am, Revere me for how I got here" - Qwezzen

Wednesday, July 2, 2008

I'm going to NI Week!!

The company I work for has decided to allow me to go to NI-Week. My boss said that anyone who goes will have to give a presentation on "What I learned at NI-Week". Apparently not many wanted to do that, but I'm more than willing to give a presentation to go to NI-Week.

I've also had some e-mail correspondence with a PR person from NI and she said I could check out a USB video camera to record my experience at NI-Week. It will be a video for NI but I'm also going to put some of the video here, so I want to get some good footage. I'm looking for any interesting and unique aspects of NI-Week I can find, or any interesting or unique people I can get on video.

I would also like to meet anyone who reads this and is at NI-Week for the video, too.

I want to encourage everyone who is able to go to NI-Week, at least on the free Expo Pass. It's a great experience! Plus Austin is fun, the music on 6th street is great, and UT is there.

Sunday, June 29, 2008

Future of Test Development

In the farther future, I believe that the development of hardware and software will be more coupled and much more automated. NI, again, is doing well on this with NI-Scope, NI-DMM, and NI-Switch. When you get an NI-DMM card, any DMM card, you use NI-DMM to deal with it. All that really needs to be known is the basics of what DMM measurements need to be made. It's all drop-in from there.

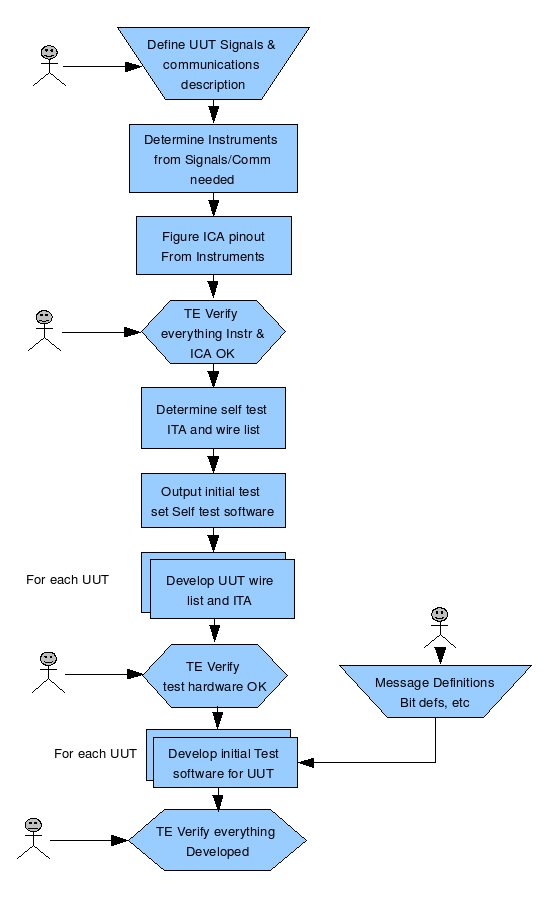

The more distant test development future should bring more automated and integrated development. I believe the Test Engineer will specify what signals, tolerances, and communications channels need to be tested for each of the UUTs that are to be tested. A test development (TD) tool will select the best instruments for the job and lay out the Interconnect panel (ICP). (The ICP is where all signals from all the instruments come out to the outside world.) The Test Engineer would verify the instruments are right.

The TD tool would use the signals to be tested and the instruments it chose to create an Integration Test Adapter (ITA) to self test the station. (The ITA takes the signal from the instrument through the ICA and routes it to cables, switch cards, and other instruments.) The wire lists for the ITA fabrication would come from the tool. From the ITA and wire lists the software for a test set self test would be generated.

Then the tool would take that ICA output and the UUT signal specs that we started with and would develop the ITA and wire list for each UUT test. Using the UUT wire lists and ITA, UUT self-test software and UUT test software would be generated.

The switch cards needed to route the signals for the Station Selftest and UUT tests would be added to the design.
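To make the first step of that idea a little more concrete, here is a rough sketch of how a TD tool might pick instruments from the signal specs. Everything in it is hypothetical: the `SignalSpec` and `Instrument` types, the catalog entries, and especially the rule that "best" means "cheapest instrument whose range covers the signal" are assumptions made for illustration, not how any real tool works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignalSpec:
    name: str         # signal name from the UUT spec
    kind: str         # e.g. "dc_voltage" or "rf_power" (illustrative labels)
    max_value: float  # largest value to be measured, in the unit for that kind


@dataclass(frozen=True)
class Instrument:
    model: str        # made-up model name
    kind: str         # what kind of signal it measures
    max_range: float  # largest value it can measure
    cost: float       # relative cost, used here as the only selection criterion


def select_instruments(signals, catalog):
    """Map each signal name to the cheapest catalog instrument that covers it."""
    plan = {}
    for signal in signals:
        candidates = [
            inst for inst in catalog
            if inst.kind == signal.kind and inst.max_range >= signal.max_value
        ]
        if not candidates:
            raise LookupError(f"no instrument in the catalog covers {signal.name}")
        plan[signal.name] = min(candidates, key=lambda inst: inst.cost)
    return plan


# Illustrative data only; none of these are real products.
CATALOG = [
    Instrument("DMM-A", "dc_voltage", 10.0, 1000.0),
    Instrument("DMM-B", "dc_voltage", 300.0, 2500.0),
    Instrument("PWR-A", "rf_power", 20.0, 4000.0),
]
SIGNALS = [
    SignalSpec("logic_supply", "dc_voltage", 5.0),
    SignalSpec("bus_supply", "dc_voltage", 28.0),
    SignalSpec("tx_output", "rf_power", 10.0),
]
```

With this data, `select_instruments(SIGNALS, CATALOG)` assigns the small DMM to the 5 V supply and the larger one to the 28 V bus. A real tool would also have to weigh accuracy, channel count, and switching, which is where the Test Engineer's verification step comes in.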

Below is a simple flow I threw together.

This whole thing is conjecture on my part and very oversimplified but, in general, seems doable. Overall, eventually, there will most likely be a lot more automation of layout and test.

Saturday, June 21, 2008

Future of Test

When a test set or UUT failure occurs, the failure information and analysis could be directed to the Test Engineer's pocket PC. He/She would be able to attach to the test set from wherever they are and troubleshoot most problems. Through the remote link some problems would be fixed and some would need to be coordinated with on-site technicians.

As for operating the tests, there could be a central operator planning, scheduling, and controlling all of the tests in a factory. The operator would have the various test screens on his/her screen to interact with the test software. There could be some people on the work floor to make sure everything gets hooked up correctly and the UUTs flow from one manufacturing operation to the next.

I do believe wireless and the web will play a much bigger role in future test. Remote operations will be more common, and more advanced failure detection, reporting, and analysis will be the norm.

I also believe that AI will play a bigger role in test...and not just because it's my hobby.

Friday, June 13, 2008

Future of Test

With all this I keep wondering, what is the future of test sets? Of test software? How much will change before I retire? When can I retire (different topic)? Also, I was doing an install of some software and had some time to contemplate the future.

I know in the future the Engineer's creed of "Smaller, Better, Faster" will be in effect. Also, the concept of Cheaper is being pushed really hard. So what will happen in the future?

Smaller is easy. We've seen it happen for years in standards like VME going to VXI and to PXI, as well as in chassis like the CompactRIO. With these different architectures we've also seen faster speeds. So in the next 10 years I'm sure there will be a sub-CompactRIO or RIO-micro.

I'm pretty sure distributed systems will be more common: a system where there is a central control and information repository with connections to small "brick" testers that can be put just about anywhere, such as temperature chambers or remote, less accessible test areas. There will be wireless connections where possible so that even wires aren't needed. Some type of Ethernet (hardwired or wireless) will connect to the brick, and the brick will control and communicate with the units under test (UUTs). All test results and the operator interface will be back at the central computer. The central computer will control several different tests at one time.

There will be more embedded test programs in the UUTs and fewer discrete interfaces. Since the UUTs being tested are getting smaller (better, faster) too, there will be less area for interfaces. Software expertise will be needed to develop Operational Test Programs (OTPs) to load into units to test them.

With distributed test systems and smaller test sets there will be more "soft" instrument front panels on the display screens and fewer physical instrument front panels. The soft front panels would show what the instrument's front panel would look like if it were physical. They would control the various instruments manually when needed and would minimize when the device isn't being used. Touch screens would be great for this function so that the operator would still "touch" the interface.

Of course, all of these are just some possibilities for the future.

Sunday, June 8, 2008

From Hack to Engineer

There are several levels of skill that go along with doing the job of Engineer. A hack (not to be confused with hacker) learns as he goes, acts and then thinks, and cleans up his messes only when he absolutely has to, if he can't pass them on to someone else.

Then there's the craftsman Engineer. He studies, he plans, uses his best practices and tools, and takes pride in his work. But a craftsman is not at the level of Engineer because, while he develops a well crafted product, it lacks predictability and certainty.

Then there are Engineers. With Engineers it's all about knowing instead of guessing. An Engineer doesn't estimate, he/she calculates. An Engineer doesn't hope, he knows. Estimates can be formed based on what they devise. Engineers basically have consistency.

Consistency is what Engineers are about. They still need the hack characteristic of learning as they go and the craftsman characteristic of using best practices and tools.

The best way to get a good product is to have good Engineers working on the product. We also need to encourage and train the hack Engineer to become a Craftsman. We also need to encourage and train the craftsman Engineer to become a real Engineer.

Sunday, June 1, 2008

SQA - a necessary evil

If people are not monitored, at least occasionally, they tend to fall into bad habits as schedules get tight or workloads increase. The code will degrade, maybe not into chaos, but at least into crappy code. With a police presence (SQA) to keep the crowd of programmers from moving in a dangerous direction, it remains fun and good code is developed. Without oversight, the result would be code chaos (or maybe just bad code). With the software police around, we move in the right direction.

Test Software, at least where I work, is not monitored by SQA like it should be. Typically the SQA people, or whoever sets up their budgets, don't budget enough for TE Software. The mission critical flight software gets the attention while test software is left alone.

Test Software needs to follow some standards, meet some criteria, and be verified to a certain level of "goodness", just like every other software. The standards are followed when we know someone is making sure we follow the standards.

The test software lead should demand that the Software Quality group do their job for test engineering, like they do for the other software groups. This is probably a departure from what most test engineers want, but if the company wants solid software, then it needs to be done. If SQA can't do the monitoring of the test software, then the Test Engineering lead needs to do at least the basic monitoring. I know the lead doesn't have time, but it needs to be done. The goal is good solid test software.

Basically, at the very least, the test lead needs to make sure the following are done:

- Code reviews are done and problems are found early.

- The requirements are met in the design and again in the code.

- At least a minimal style is met, for reusability's sake and for debugging.

- The code is checked and verified against the requirements.

Again, the goal is good code; policing the engineers is how to get there.

Wednesday, May 28, 2008

Peer Reviews

Statistics have shown that Peer Reviews do help if they're done right. (See the book “Best Kept Secrets of Peer Code Review” by Jason Cohen.)

First, some good reasons to do peer reviews. The best reason is that defects found early cost less to fix, simple economics. A second pair of eyes can help. Another reason is that less experienced programmers can learn from more experienced programmers at peer reviews. One note is that typically everybody can learn something from other people's code.

Second, to shoot down some myths. People have a lot of reasons to not do Peer Reviews. Things like "my code is already good enough". To this I have to ask, have you ever had any problems with your code? If you answered No then you are better than any of the Rock Star programmers that I've heard about. Peer reviews are to get rid of problems before they get out.

How about “We don't have time for peer reviews.” Then how much time do you have to fix problems later? And how much will it cost?

Another myth is that peer reviews don't help. They don't help if you don't do them. They don't help if you don't really review the code. If you find just a few defects it can be worth doing it. My Mom and Dad used to say “Anything worth doing is worth doing right”.

There are always excuses for not doing peer reviews, but no good reasons.

People have ego problems with peer reviews, too. There's the “Big Brother” effect where programmers feel like the peer review is there to monitor their every move. This is not how it should be. It should be about removing defects and not about monitoring.

Earlier I said peer reviews help if they're done right. That means the code is really reviewed: the reviewers read over the code, look for potential problems, and make sure it meets the requirements and is understandable. If this is done, then defects are found and fixed. If the code is just glanced over, fewer defects are found, and problems are found later and cost more to fix.

To close there are some truisms about Peer Reviews:

1) Hard code has more defects – the more complex the code, the more potential defects.

2) More time yields more defects – The more time spent reviewing the more defects found

3) It's all about the code – Review the code not the programmer

4) The more defects the better – Defects found early are cheaper to fix

The bottom line is a better product and that's what peer reviews should be all about. Happy reviewing!

Saturday, May 24, 2008

Technical Debt

Here is what I think. For software, some items that would cause technical debt would be:

- Hacked together code or code with a lot of short cuts

- Spaghetti code

- Code that is complex or hard to follow

- Incomplete or inadequate error checking.

- Code to be implemented later

- TBD's or incomplete requirements.

- Poor or Incomplete design

- Anything “owed” to the software that is deferred.

I was contemplating technical debt for the rest of test, and hardware can most certainly have technical debt, too. Again, it would be anything owed to the hardware. Some of this would be:

- Incomplete schematics

- Unspecified connectors

- Incomplete grounding or shielding

- Incomplete wire lists

- Incomplete wire specifications where they were needed.

- Again, anything “owed” to the hardware

The mechanical portions could have technical debt as well. It would be things like:

- Incompatible or incomplete layout

- Unspecified mechanical connections

- Other items that are needed but left unspecified.

(I'm not as much of an expert on the hardware or mechanical aspects of test, but I'm sure a lot of the hardware people can add to these lists.)

The blogs by Steve McConnell on Technical Debt equate it to financial debt because they have a lot of the same issues. Technical debts, as well as financial debts, have to be taken care of at some point in time or they will bite you in the rear end. If they are not, the hardware and mechanical technical debt can be disastrous at integration time or when test operators actually use the equipment. The software technical debts can come back at any time, like an unpaid bank loan. Some software debts may bite you at integration time, or worse, at validation time, or even worse, while the equipment is in use on a production line. To keep it in financial terms, this would be like a bankruptcy. You could get past the technical bankruptcy, but your reputation could be ruined for a while.

Also, the hardware technical debts can cause the software to have technical debt if a hardware debt has to be fixed in software. It's like the hardware defaulting on a technical loan co-signed by the software. This seems to happen quite often.

Overall, when technical debt is taken on, it needs to be taken into account for future releases. If there is too much, it can become a burden on the future of your tests. If it's not taken care of, it could bring everything crashing down, potentially during validation or production.

The scary thing is if you don't realize you're taking on technical debt you have bigger problems and your people need to be trained or replaced.

Tuesday, May 20, 2008

NI Week - I'll get there somehow!

The reality of it is that I AM going to go on my own, if nothing else on the "Exhibition Only" pass. I live in the Dallas, TX area, which is about 3 hours (2 1/2 hours the way I drive) away. I'm planning on leaving one morning, early, with a gallon of coffee in hand, making it there for the opening address that day, seeing the exhibits, then coming back that evening. Simple...right!

I may get a cheap motel and stay one night but I have a while to figure that out.

The bottom line is I will be at NI Week, one way or another, even if just for one day. This is something I'm passionate about! It is an experience that everyone should have. I was fortunate enough to go last year and I'm thankful for that. If I can find the money, I'm going to register for some of the other options, you know, things like food or the sessions for one day. However, as a single Dad with a Son in college and a Daughter about to go to college (UT Austin, near NI), I can't justify much more than the gas for the trip on my own right now.

I would like to blog some more on NI, but since I've been pulled off to write processes I haven't had a lot of testing to write about. (In 2009, I'm going to make sure that's not the case!)

I will blog about my road trip and my experience at NI Week.

Here's the link to register for NI Week, sign up early. June 1st is the deadline for the Early Bird Special.

Friday, May 16, 2008

The most important tool - Good People

A person who has had software classes on “How” to program and what makes a good program, and not just coding, is much better than the guy who has had one or two classes and thinks he's a programmer. NOTE: I do want to say that there are many really good programmers who are self-taught; they understand the what, the how, and the intricacies of how. These are the code ninjas (without getting into the pirate vs. ninja discussion). These are the guys that you should really find, but I don't know if they would be willing to work in test.

Failing at finding the code ninjas out there and convincing them test software is the place to be, you need good software people who love to operate the hardware. I don't agree with programming tests at interviews, but I do believe Test Software people need the skills and knowledge of programming in order to do the job right, even if they are doing both the software and hardware parts of the job. Programming skills should be a requirement to do test software.

Another aspect of the people portion of the job is “can we all get along”. Engineers are notorious for personality quirks. Major problems can happen if a team's quirks don't mesh. In this world of diversity we're all supposed to be accepting of each other. But sometimes it just doesn't work, especially if there's a time crunch or a big technical hiccup. So, even with diversity, sometimes teams don't work due to personality conflicts. If there is a toxic personality on the team, it can be just as bad as having all bad engineers on the team. (When I hear Diversity I always think of the Dilbert cartoon where someone says “The longer I work here, Di Verse it gets”. Say it out loud and you'll get it.)

The point I want to make sure I get across is that People are really and truly the most important asset, and are more important than all the tools in the world.

Sunday, May 11, 2008

NI Week is Coming!!

NI Week is getting close!

August 5 - 2008

I went to last year's NI Week, and it was incredible!! It was a very valuable experience. I would love to go to this year's, but typically the company I work for won't let the same person go to something like this two years in a row. They tend to want to send different people every year. I'm going to try to convince them I should go; I guess we'll see how it goes in the next few weeks.

Below is a link to the preliminary program for this year. By the way, a quote from me is on page 27.

View the NIWeek 2008 Preliminary Program

Testing using simulations

The main reason TE software is different is that a lot of it runs against hardware: it calls hardware drivers to read information from the hardware and to control it. It does take work, but it's testable. In situations where you don't have hardware to test with, can't induce all the errors you need to check, or just want a good software product, you need to unit test.

Some common failures that happen during the “Go” path testing are typically checked because they come up during regular development. But not all faults are checked. These off nominal paths are not easily checked with standard UUTs on a test set.

Some tools for more comprehensive testing or fault testing would be:

- IVI driver simulation mode

- Other simulations (i.e. DAQmx in MAX with simulated drivers)

- Inserting error data

- A software code testing tool (i.e. Klocwork)

The easiest of these test methods is a software code testing tool, such as Klocwork. This is because, after the tool is set up, you just run it and let it tell you about the potential (or certain) failures. I’m just starting to learn about Klocwork so I don’t know all the specifics at the moment. It appears to be able to capture a lot of the logic and path problems. It goes past the ability of LINT to verify standards and does checks along paths. I’m not sure how it works if you use LabWindows CVI functions or if that’s a non-problem.

Another way of testing is using simulation. Some easily available ways to do this are the simulation features in some IVI drivers, tools such as Agilent's Virtual Rack, or DAQmx with NI's Measurement & Automation Explorer (MAX). I haven't used Virtual Rack, but it sounds like it will simulate instruments as if you were actually running tests with a rack of instruments. I don't know about the setup or operation of the tool, but the presentation I saw made it look like it could be useful. Virtual Rack IVI drivers

The simulation typically built into IVI drivers allows instruments to be run in simulation mode. However, a more powerful simulation tool, at least for some NI instruments, is MAX. For NI's DAQmx instruments, you can set up a simulated instrument and the test software operates as usual. The simulated instrument can be set up to return various values, as needed. The main problem with this is that if an instrument is simulated using MAX, you have to go back to MAX to check it. In other words, someone could take out a card and simulate it, and the software wouldn't know.

One brute force method of simulation is to comment out the call to an instrument driver and just set the return variable to a value. Without some software discipline, this can be dangerous. If one of these is left in the code, then the test won't return a valid answer. If this method is employed, either a comment tag (I usually use //JAV) should be put in where the test is, or a compiler directive, like an #ifdef, should be used. I typically use this during developmental testing, but I always make sure that, before the end of the day, all of these are out so that I don't forget about them before the next day.
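Here is a minimal sketch of the compiler directive version of that brute force method. The names SIMULATE_DMM, RELEASE_BUILD, Dmm_ReadVoltage, ReadSupplyVoltage, and SupplyVoltageOk are all made up for illustration and are not from any NI or IVI driver. The idea is that the simulated value lives behind an #ifdef, and a release build refuses to compile if the simulation is still turned on.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical switch: define for bench builds without hardware
   (for example with -DSIMULATE_DMM); leave undefined for real runs. */
#define SIMULATE_DMM

#ifndef SIMULATE_DMM
/* Hypothetical real driver call; stands in for whatever the instrument
   driver actually provides. Returns 0 on success. */
int Dmm_ReadVoltage(double *volts);
#endif

/* Fail the build if a simulated reading is about to ship. RELEASE_BUILD is
   also a hypothetical name, set only by the release build. */
#if defined(SIMULATE_DMM) && defined(RELEASE_BUILD)
#error "SIMULATE_DMM is still defined in a release build"
#endif

/* Wrapper the test code calls instead of calling the driver directly. */
int ReadSupplyVoltage(double *volts)
{
    assert(volts != NULL);
#ifdef SIMULATE_DMM
    *volts = 5.02;   /* //JAV simulated reading, not real hardware */
    return 0;
#else
    return Dmm_ReadVoltage(volts);
#endif
}

/* Example test step: a 5 V supply must be within +/- 0.25 V. */
int SupplyVoltageOk(void)
{
    double volts = 0.0;
    if (ReadSupplyVoltage(&volts) != 0) {
        return 0;    /* a driver error counts as a failure */
    }
    return volts >= 4.75 && volts <= 5.25;
}
```

Keeping the simulated value in one wrapper means there is only one place to search for the //JAV tag before the end of the day.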

My main point is to use tools that are readily available to make sure you put out the best product possible.

Thursday, May 1, 2008

Code and Unit Testing

Last time I talked about Unit Testing or external types of test. Now some thoughts on internal testing.

IDEs (Integrated Development Environments) using basic debugging techniques (i.e. single step, view variables, break points, etc.) are the front line of internal testing. They give developers insight into what's going on in a program. A program is run, it doesn't work, you debug. But there are other, more powerful ways of doing debugging internal to a module that aren't as time consuming.

First, there is an ASSERT statement (the assert macro in assert.h) in C and C++. In NI's CVI it is the DoAssert statement in the toolbox (include toolbox.h to use it). The DoAssert statement is used to help find problems during development that don't happen that often. Basically, a condition is passed to DoAssert (Example: i>1) and if the condition evaluates to TRUE, execution continues. If the condition evaluates to FALSE, module information is printed and execution stops. It prints out the module name, a number like the line number (remember __LINE__ is the current line number in the program), and a message, typically with some debug information.

I use ASSERT or DoAssert (CVI) to check for out of tolerance conditions that happen once in a blue moon (good 'ol southern saying that means “not very often”).
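As a small sketch of the idea, here is the standard C assert macro guarding an array index. I'm using plain assert from assert.h here rather than CVI's DoAssert so it builds with any C compiler. MAX_CHANNELS, StoreReading, and GetReading are made-up names for illustration.

```c
#include <assert.h>

#define MAX_CHANNELS 8   /* hypothetical channel count */

static double g_readings[MAX_CHANNELS];

/* Save a reading for one channel. */
void StoreReading(int channel, double value)
{
    /* Should never fire in a correct program. If it does, assert prints the
       failed expression with the file name and line number, then aborts. */
    assert(channel >= 0 && channel < MAX_CHANNELS);
    g_readings[channel] = value;
}

/* Fetch the last reading stored for one channel. */
double GetReading(int channel)
{
    assert(channel >= 0 && channel < MAX_CHANNELS);
    return g_readings[channel];
}
```

Defining NDEBUG before including assert.h compiles the checks out, so they cost nothing in a release build.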

Another way of internal testing is to do logging. Some people only use logging as a last resort after problems are found, but I advocate putting in logging as you're coding, putting log statements in at potential problem spots. Compiler directives (#ifdef and #endif) can control whether the logging is executed or not.

I have some logging routines lying around that I always use. They use the vprintf style of functions so the logging is more like a printf statement in C. A LogOpen function is called at the start to open the log file. A LogClose function is called at the end to stop the logging. The logging does I/O during execution by flushing the buffer every time LogData is called, but that can be controlled with compiler directives. The flush can be turned off if you don't want the I/O delays.
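A rough sketch of what routines like these could look like is below. This is not my actual code. The function names match the ones above, but the signatures and the LOG_ENABLED and LOG_FLUSH_EACH switches are assumptions made up for the example.

```c
#include <assert.h>
#include <stdarg.h>
#include <stdio.h>

#define LOG_ENABLED      /* remove to compile the logging out */
#define LOG_FLUSH_EACH   /* remove to skip the flush and its I/O delay */

static FILE *g_log = NULL;

/* Open the log file at the start of a run. Returns 0 on success. */
int LogOpen(const char *path)
{
    assert(path != NULL);
    g_log = fopen(path, "w");
    return (g_log != NULL) ? 0 : -1;
}

/* printf-style logging built on vfprintf. */
void LogData(const char *fmt, ...)
{
#ifdef LOG_ENABLED
    va_list args;

    if (g_log == NULL) {
        return;              /* log was never opened, quietly do nothing */
    }
    va_start(args, fmt);
    vfprintf(g_log, fmt, args);
    va_end(args);
#ifdef LOG_FLUSH_EACH
    fflush(g_log);           /* keeps the file current if the program dies */
#endif
#else
    (void)fmt;               /* logging compiled out */
#endif
}

/* Close the log file at the end of a run. */
void LogClose(void)
{
    if (g_log != NULL) {
        fclose(g_log);
        g_log = NULL;
    }
}
```

A call then reads just like printf, for example LogData("ch %d read %f volts\n", channel, volts).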

And, like I started with, there is always the standard debugging tools. These are just a couple of ways to do debugging internal to the module. I just want people to step out of their little box and think “How can I make my code better?” and “What can I do to keep from giving incorrect results or give errors?”

Sunday, April 27, 2008

Code and Unit Test

Test Engineers (TEs) doing software need to have some software discipline. They don’t need the same discipline as tactical or man-rated software development needs, but Test Engineers do need software discipline nonetheless. TEs need to do some unit testing, some stress testing, some fault path testing, some levels of diagnostics, things like that.

Many TEs use the excuses of “our software is different” or “our software is too hardware intensive” to avoid code testing. I say any 10 year old can give excuses; what about results? What is the quality of your software?

As I see it, there are two basic types of software testing: internal testing and external testing.

First the external testing or unit testing. In the software world there is quite often a test group. The software developers develop software and throw it over the wall to testers. In the Test Engineering world, there's not always that luxury. That's the reason to have software self discipline. There's more to external testing but for now I'm limiting myself to unit testing.

Definition: Unit Test is the testing of a module or a logically grouped set of modules, covering inputs and outputs, limits, stress, and paths.

To test a unit(s) it can be as simple as a function calling the unit(s) being tested over and over with various inputs and then checking the output. If NI's TestStand is in use then a sequence is set up to call the module(s) under test and the output checked.
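The simple function version can be sketched like this. InTolerance is a made-up unit under test, and the table of cases and RunInToleranceTests are just one way to lay it out.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical unit under test: is a measurement within nominal +/- tol? */
int InTolerance(double measured, double nominal, double tol)
{
    assert(tol >= 0.0);
    return measured >= nominal - tol && measured <= nominal + tol;
}

typedef struct {
    double measured;
    double nominal;
    double tol;
    int    expected;   /* 1 = in tolerance, 0 = out of tolerance */
} TestCase;

/* Calls the unit over and over with various inputs and checks each output.
   Returns the number of failed cases. */
int RunInToleranceTests(void)
{
    static const TestCase cases[] = {
        {  5.00, 5.0, 0.25, 1 },   /* nominal */
        {  5.25, 5.0, 0.25, 1 },   /* right at the upper limit */
        {  4.75, 5.0, 0.25, 1 },   /* right at the lower limit */
        {  5.50, 5.0, 0.25, 0 },   /* too high */
        { -5.00, 5.0, 0.25, 0 },   /* wrong polarity */
    };
    int failures = 0;
    size_t i;

    for (i = 0; i < sizeof cases / sizeof cases[0]; ++i) {
        int actual = InTolerance(cases[i].measured, cases[i].nominal,
                                 cases[i].tol);
        if (actual != cases[i].expected) {
            printf("case %lu failed: got %d, expected %d\n",
                   (unsigned long)i, actual, cases[i].expected);
            ++failures;
        }
    }
    return failures;
}
```

Adding a new limit or stress case is then just one more row in the table.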

One tool that I use quite often is called CUnit. It's a unit testing framework, and there is a whole range of _Unit type frameworks: JUnit for Java, CPPUnit for C++, xUnit for MS Visual stuff (i.e. NI Measurement Studio), and some others I can't think of right now. A lot of these can be found at SourceForge.net, a great source of open source software.

Since test software is quite often hardware intensive, sometimes some hardware simulation is needed, and I use the word “simulation” in the weakest form. It's as simple as creating a dummy routine to replace the hardware instrument driver that returns the data you want to test with, i.e. good data, erroneous data, stressed data, etc.

To test, the driver routine would call the module being tested. The module being tested calls the driver simulation which returns a predetermined set of results which allows the module being tested to return a predetermined result. If the returned result is really what you expect, the test worked. Otherwise, the module needs to be fixed.
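Here is a sketch of that arrangement, assuming the module under test takes its driver as a function pointer so a dummy can be swapped in. All of the names (ReadVoltsFn, CheckFiveVoltRail, the Sim routines, RunRailTests) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Shape of the driver call the module depends on. Returns 0 on success. */
typedef int (*ReadVoltsFn)(double *volts);

typedef enum { TEST_PASS, TEST_FAIL, TEST_ERROR } TestResult;

/* Module under test: checks a 5 V rail using whatever driver it is given. */
TestResult CheckFiveVoltRail(ReadVoltsFn read_volts)
{
    double volts = 0.0;

    assert(read_volts != NULL);
    if (read_volts(&volts) != 0) {
        return TEST_ERROR;            /* the driver reported a fault */
    }
    return (volts >= 4.75 && volts <= 5.25) ? TEST_PASS : TEST_FAIL;
}

/* Dummy drivers returning predetermined data: good, erroneous, faulted. */
static int SimGood(double *volts)  { *volts = 5.01; return 0; }
static int SimHigh(double *volts)  { *volts = 6.30; return 0; }
static int SimFault(double *volts) { (void)volts;   return -1; }

/* Test driver routine: calls the module with each dummy driver and checks
   for the predetermined result. Returns the number of failures. */
int RunRailTests(void)
{
    int failures = 0;

    if (CheckFiveVoltRail(SimGood)  != TEST_PASS)  { failures++; }
    if (CheckFiveVoltRail(SimHigh)  != TEST_FAIL)  { failures++; }
    if (CheckFiveVoltRail(SimFault) != TEST_ERROR) { failures++; }
    return failures;
}
```

On the real test set the same module would be handed the real instrument driver call instead of one of the Sim routines.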

Overall, the point I'm making is not to hack something out and throw it on the hardware. There are options, whether you've thought of them or not. By The Way, this isn't a comprehensive list of ways to unit test. Use your imagination, I know you have one, you're an Engineer!

I just want people to step out of their little box and think “How can I make my code better?” and “What can I do to keep from giving incorrect results or give errors?”

Note: Some of these test methods are part of the Agile practice called Test Driven Development (TDD). It just means your code should be driven by the tests. I didn't want to scare anyone with the word "Agile."

Friday, April 18, 2008

Zephyr - test management tool

Zephyr is a complete test management system. It has collaboration tools, customizable dashboards for instant status, Test Desktops, with built in metrics, and test execution capability with defect tracking.

You can read about its list of product features here and see an overview video here. There's also a limited-user trial version here. It has some very good looking interfaces.

I have only read about the product and have not tried it out but it sounded like it had some very useful features. Since I have not actually used it I can only give you my impressions. Some things I liked were:

- Central repository for documents or at least links to the documents.

- Test/QA/Management Collaboration

- Instant update of test status

- It sounds like it was for Agile development with all the communication and collaboration, but I'm sure it can be used for any type of development.

The ability to manage widely distributed test systems is impressive and massively useful.

A couple of big questions, at least for me.

One, how well does it play with TestStand and other NI products? NI is the big dog on the block for test software and electronics, at least at Lockheed, and to get a foothold it would need to complement our NI abilities.

Two, we have a lot of legacy code and equipment from NI that we don't want to lose. Since our previous test cases are in TestStand and written in CVI or LabVIEW, how well can we reuse our old tests? How well will it interact with the legacy code?

I'll probably download and try out the trial version, but it would be in my spare time. Unfortunately, that wouldn't be until the end of the year.

All-in-all Zephyr looks like it has potential and I would like to hear from anyone who tries it.

Apologies

Things are settling into more of a routine so I should be able to blog more, but she does come first.

Thanks

Wednesday, March 26, 2008

Design Tools

There are a lot of simple design methods that can be used for a better test set. Just a simple flow chart to lay out the design is good at a minimum. Something like MS Visio or any drawing program can be used.

Another design tool is to do what is called swim lane charts. This is where each separate item (i.e. UUT, Test Set, Other Computer) gets a lane and every time something is required of that item, communication to or from that item, some process/decision/operation block is put in that lane. It shows the interaction between each of the different items and the operation each item does.

UML is another design tool, especially when doing object-oriented programming. I have read about UML but have not done design with it, so I don't have a good grasp of it. I know it's a way of displaying objects and showing the interaction between them. The software engineers tend to use UML for their designs.

State diagrams are also really useful for showing transitions between states.

My main thought on all of this is don't just code away with little or no forethought. Use your tools for a better test set software design; it will work better in the long run and be more maintainable.

Monday, March 17, 2008

Test and a lack of understanding of software.

It’s absurd to think of test development in one dimension, the hardware dimension. If you’re involved with CMMI, processes, etc., you’ll know that it all started with problems with software, or the misunderstanding of software and its development. Managers didn’t know how software worked, or what it takes to develop it. Software practitioners were just “winging it” and learning by tribal knowledge. Projects were brought down by software problems.

Test engineers developing software for ATE need to at least come into the 90s and develop software using at least some standard software engineering approaches, simple things such as keeping track of the requirements. Test requirements very often involve hardware and software and not exclusively one or the other. That’s fine, you still need to understand the software portion of the requirement.

The requirements need to be understood and traced up to any higher requirement or derived requirement of something in the UUT that needs to be tested. This can be done in a tool, such as RequisitePro or DOORS, or in an Excel spreadsheet. But you need to know what you’re testing.

Another tool that can help is Requirements Gateway by NI. I haven't used it personally, but it seems like a useful tool.

More test tool stuff to come

Monday, March 10, 2008

Test Engineers

Since most TEs come out of EE, the software portion of ATE is “hacked” out (the old-school meaning of hack, to bang out code until it works), and the code is less than optimal. In other words, it works for the normal case and little else.

Some people who have software degrees or have been doing software for a long time work toward having more software discipline in their ATE code, but it still tends to be lacking in most areas. Software tools tend to go unused or see limited use; some believe an IDE is all that is needed.

There’s more to developing software than just an IDE and some time. Tools are available to help develop code. I think software tools aren’t used in test due to a lack of understanding, lack of knowledge, and lack of patience.

Sunday, March 2, 2008

Time to come into the 90s

While real life has gone on personally, work life has continued, too, since I like to eat and live indoors. One of my biggest pet peeves lately is people who are stuck in "the way we've always done it." It's really just fear: the new way of doing things may affect their job, may affect the bottom line, or they may not really understand new ways of doing things.

Have you ever thought where we'd be without evolution? Whether you believe in Creation or Evolution (I personally believe in Creation but we evolved from there), businesses, business practices, and business methods evolve. That's why some businesses that don't change with the times die off (e.g. Braniff Airlines and the Remington Rand typewriter company) and others change, evolve, and continue to thrive (IBM and the Harley-Davidson motorcycle company). People in all businesses need to learn to change and evolve or die off. People within the business need to evolve to keep the businesses evolving.

The people who are stuck in the 70s and 80s need to come into the 90s or their businesses will stay in the 70s and 80s. They need to change, but I wouldn't want to push them too fast by trying to get them to come into the 2000s.

They need to evolve and try to get up to date in their thinking.

New ideas need to be tried, at least ideas like Object Oriented Programming (OK, that's not new but it is to them), Agile programming, and using ASICs and FPGAs. At least get past vacuum tubes and transistors. All this is not magic, it's just evolution, or coming into the 90s from whatever decade you're stuck in.

It is a disservice to the people who work for the less evolved, too. If you want to compete in this world you at least need to be involved in this world.

I don't know how to get these people up to date, or even close to up to date, but for the businesses' sake and their sake, it needs to happen.